Introduction

Have you ever had that weird, prickly feeling that someone is watching you? Now, imagine that feeling following you for eight hours straight, every single day. But here’s the thing: it’s not a human boss staring over your shoulder; it’s an invisible line of code buried in your laptop. In the late 18th century, the philosopher Jeremy Bentham devised the Panopticon, a prison designed so that inmates never knew exactly when they were being watched. Fast forward to 2026, and we’ve basically built that prison out of software. We are now what I call "Glass Employees." Every click of your mouse, every tiny facial twitch during a Zoom call, even the rhythm of your typing: it’s all being turned into data points. We aren't just tracking whether people show up anymore; we’re trying to track how their brains work. And honestly? It makes me wonder: can you really have a brilliant idea when you feel like a lab rat in a digital cage? As we rethink what human dignity looks like in this AI age, we have to stop and ask: where does "efficiency" end and our right to just think in peace begin?

The Engineering Vision: Why Did We Even Build This?

To understand why we ended up here, we have to look at what the people who designed these systems were actually thinking. They weren’t trying to be the "bad guys" in a sci-fi movie. It actually started with a pretty noble goal: Evidence-Based Management. The idea was simple: if you can’t measure it, you can’t fix it. Developers wanted a world where a boss’s bad mood or personal bias didn't decide who got a promotion. They used Predictive Analytics, hoping to spot burnout before it even happened. The dream was a workplace where data provided the kind of objective support that humans often fail to give.

But this is where the logic hits a wall. This "digital mindset" treats our brains like computer processors that should run at 100% capacity all day long. Even the European Commission’s Joint Research Centre (JRC) is raising red flags about "Hyper-surveillance," noting how systems now track eye movements during meetings. I’ve heard of companies that flag you just for pausing too long between emails. While an engineer sees this as "fixing a leak" in productivity, they’re missing a huge biological fact: humans need to daydream. That "idle time" is actually when we solve the hardest problems. If you strip that downtime out of the workday, you aren't making a better office; you’re just building a data factory. If we keep choosing "constant digital movement" over "actual great ideas," we’re going to lose the very innovation we were trying to measure in the first place.

The Psychological Cost: From Trust to Constant Stress

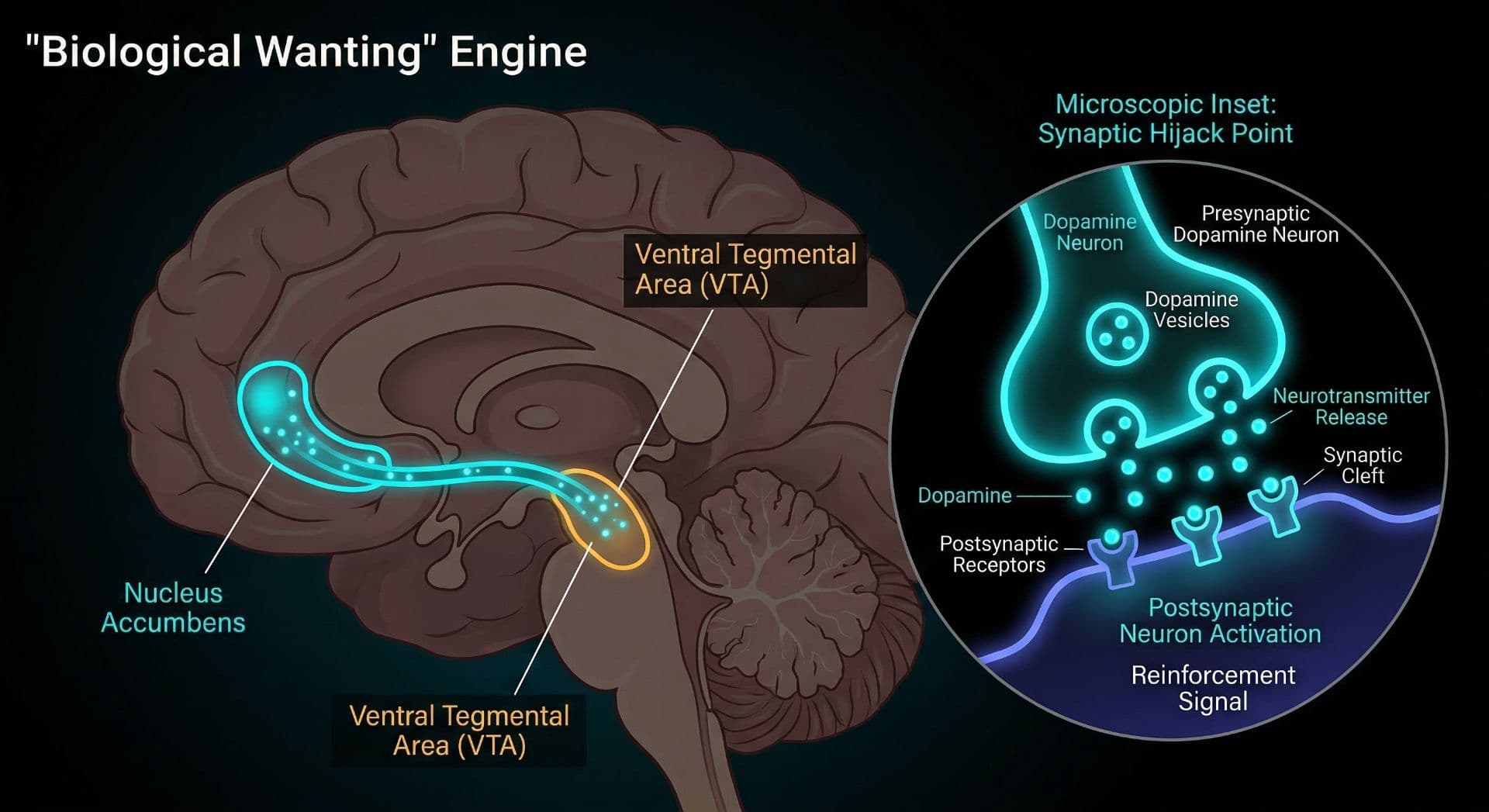

Let’s be real for a second: nobody does their best work with a digital microscope over their head. "Psychological safety" is a fancy term we hear a lot lately, but it really just means feeling safe enough to take a risk or mess up without being punished for it. But when you're being monitored constantly, your brain stays in a state of "threat response." That little part of your brain called the amygdala goes into overdrive. According to the Harvard T.H. Chan School of Public Health, this kind of pressure is a massive psychological stressor. Constant monitoring also kills the basic trust that makes a team actually work together.

So, what do we get instead of innovation? We get "Defensive Compliance." This is where you stop trying to be brilliant, and you just focus on not looking "bad" to the algorithm. The biological toll is actually pretty scary; constantly high cortisol levels lead you straight to burnout. You start feeling like a tiny cog in a massive machine, working to satisfy a number instead of creating something of value. If you’re too scared to think because you know you’re being watched, your creativity just shuts down. True excellence needs the freedom to fail in private—a luxury that the "Glass Employee" no longer has.

Productivity Theater: Why Watching People Always Backfires

The biggest irony in all of this? All this monitoring usually fails to make things better. Instead, it creates what experts call Productivity Theater. As Argaam has pointed out, when employees feel this much pressure, they spend more energy acting busy than actually doing the work. People start using "mouse jigglers" just to keep their status green on Slack or Teams. It’s not because they’re lazy; it’s a survival tactic. It’s a way to fight back against a system that has zero empathy for the human process.

We also have to talk about Data Sovereignty. I mean, who actually owns the data from your typing speed or the expressions on your face? Rules like the GDPR say that just being "transparent" isn't enough; companies have to prove they actually need all this data to do business. The OECD is now pushing for Algorithmic Transparency. Why aren't we using AI to help us instead of just leashing us? Imagine a system that tells you to take a walk because it senses your stress levels are peaking. The future of work should be built on trust, where technology supports our potential rather than trying to control every second of it.

Conclusion: A Smarter Office or a High-Tech Cage?

In the end, I believe AI is just a mirror. It reveals what a company truly values. We are standing at a crossroads right now: are we going to build a digital Panopticon full of anxious, exhausted workers, or a support system that actually helps humans thrive? You can’t have real efficiency without the privacy to think and the safety to fail. As our work and personal lives continue to blur, the success of AI shouldn't be measured by data points, but by trust. So, what’s it going to be? Are we making our offices smarter, or are we just designing more sophisticated cages?
