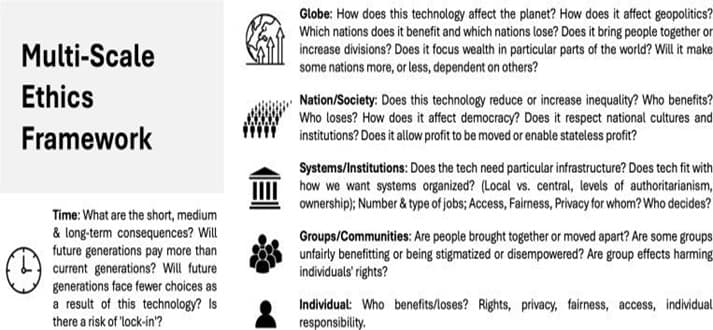

AI tools have the potential to revolutionize healthcare, but they carry ethical risks that need to be considered before implementation. Interpretable models offer an alternative that addresses a problem which, among many other concerns, undermines informed consent, but they are not a complete solution where generative AI and large language models (LLMs) are involved. Before an AI system is implemented clinically, three things need to be evaluated: first, technical robustness; second, implementation feasibility; and third, the balance of harms and benefits. Because of these and other concerns that still need to be addressed, many areas of medicine have been slow to adopt AI-based tools into practice.

It is difficult for clinicians to determine, based on the current evidence, whether clinical use of AI is justified, particularly regarding the medical accuracy of its output, the risk of hallucinations, and the technical feasibility of integrating it into existing IT systems. Adoption of AI systems in medicine has been slow, especially given the lack of clarity regarding patients’ informed consent.

It is difficult to assess the effectiveness and reliability of adaptable AI systems when data are biased or missing for particular subgroups, which further perpetuates patterns of historical discrimination. Because these systems need to perform well across subgroups, it is not clear how patient data will be used in the future, and that can lead to breaches of patient privacy and confidentiality; AI systems also require a set of values to be specified when they are programmed. For this reason, balancing AI research with its real-world application remains a challenge for practitioners and policy-makers. For now, striking a balance between the interpretability of an AI system and the production of accurate, reliable clinical output remains a work in progress.
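One way to make the subgroup concern concrete is to report a model's accuracy separately for each subgroup and flag groups with too few records to judge. The following is a minimal sketch, not a validated audit procedure: the function name `accuracy_by_subgroup`, the record layout, and the `min_count` threshold are all assumptions made for illustration.

```python
from collections import defaultdict


def accuracy_by_subgroup(records, min_count=30):
    """Summarize accuracy per subgroup.

    `records` is an iterable of (subgroup, prediction, label) tuples.
    `min_count` is a hypothetical cutoff below which a subgroup is
    flagged as underrepresented; it is not a clinical standard.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for subgroup, prediction, label in records:
        totals[subgroup] += 1
        correct[subgroup] += int(prediction == label)

    report = {}
    for subgroup, n in totals.items():
        report[subgroup] = {
            "n": n,
            "accuracy": correct[subgroup] / n,
            # Too few records means the accuracy estimate is unreliable.
            "underrepresented": n < min_count,
        }
    return report
```

A large gap in accuracy between subgroups, or a subgroup flagged as underrepresented, would be a reason to question whether the evidence supports use in that population.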

Technical Assessment (Non-Maleficence)

Given the concerns above, the technical assessment asks whether the system is scientifically ready, that is, whether the technology can justifiably be said to address its intended clinical purpose. A reasonable claim in favor of clinical use rests on evidence that the system’s practical use and effectiveness have been extensively validated.

Implementation Feasibility (Justice)

Implementers can look to the general-purpose use of AI tools outside medicine, such as in finance, education, and engineering, to justify the clinical use(s) of the technology and its intended purpose(s), rather than looking only at the model’s technical feasibility.

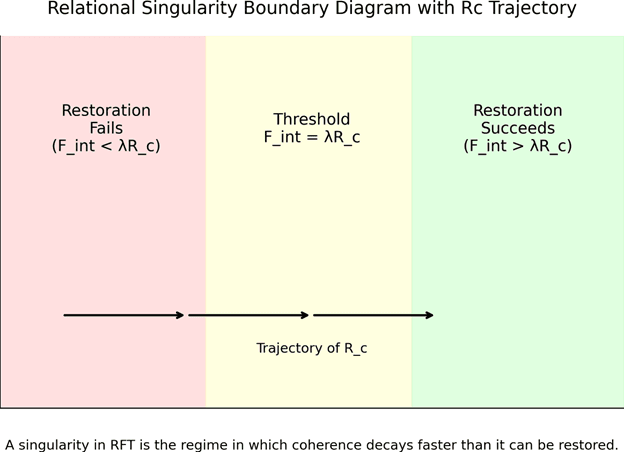

Any potential failure during implementation of the system, i.e., in clinical practice, should be examined. The presence of these failures does not necessarily mean that the AI system should be rejected, but rather that attempts need to be made to mitigate their effects and find alternative solutions.

Risk Evaluation (Beneficence/Non-Maleficence)

Risk evaluation determines whether the risks and expected benefits of implementing an AI system in clinical practice are justifiable. This can be done by establishing the potential harms and benefits associated with the clinical implementation of the system. To evaluate risk and expected benefits, there are three factors to be considered:

Probability of harms and benefits

This refers to the likelihood that a harm or benefit will occur with the use of the AI system. Clinical trials, historical data, and expert opinion can be used to estimate these probabilities.

Nature of harms and benefits

This refers to the severity or magnitude of the consequences of an AI system’s output, assessed either subjectively, according to the patient’s preferences, or objectively.

Uncertainty

This factor accounts for the level of confidence in the estimates of the probability and nature of outcomes. Higher uncertainty means less confidence in the estimates, which can be due to limited data, low-quality evidence, or poor applicability of existing evidence to the AI’s intended clinical use. Lower uncertainty equates to higher confidence, which may be associated with large sample sizes, more robust study designs, and more clinically applicable data.

Based on the three factors stated above, we can evaluate the product’s usability and classify it as minimal-risk, moderate-risk, or high-risk. Selective deployment of the AI model is also a good idea: the process can be reviewed and potential outcomes evaluated before widespread use of the system.
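To illustrate how the three factors might combine into a risk tier, here is a minimal sketch. Everything in it is an assumption for illustration: the `RiskEstimate` fields, the 0-to-1 scales, the rule that uncertainty pushes the probability of harm toward the worst case, and the two cutoffs. It covers only the harm side; expected benefits would need to be weighed separately.

```python
from dataclasses import dataclass


@dataclass
class RiskEstimate:
    probability_of_harm: float  # likelihood that harm occurs, 0 to 1
    severity_of_harm: float     # magnitude of the harm, 0 to 1
    uncertainty: float          # 0 = fully confident, 1 = no confidence


def risk_tier(est, moderate_cutoff=0.1, high_cutoff=0.4):
    """Map an estimate to 'minimal', 'moderate', or 'high'.

    The cutoffs are hypothetical placeholders, not regulatory values.
    """
    for value in (est.probability_of_harm, est.severity_of_harm, est.uncertainty):
        if not 0.0 <= value <= 1.0:
            raise ValueError("all inputs must lie between 0 and 1")

    # Conservative assumption: the less we trust the estimate, the closer
    # the probability of harm is moved toward 1.
    p = est.probability_of_harm
    adjusted_probability = p + est.uncertainty * (1.0 - p)
    score = adjusted_probability * est.severity_of_harm

    if score >= high_cutoff:
        return "high"
    if score >= moderate_cutoff:
        return "moderate"
    return "minimal"
```

Under these assumptions, the same probability and severity can land in different tiers depending on uncertainty alone, which mirrors the point that weak evidence should itself raise the level of caution.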

Experimental and innovative uses of AI should go through an approval process with the research ethics committee (REC). In this process, clinicians should provide information on the technical assessment, on implementation feasibility, including strategies to mitigate potential failure modes, and on the risk evaluation, covering potential harms and expected benefits. Human-in-the-loop processes that ensure human oversight of the AI’s outputs should also be addressed. In contrast, standard uses of AI could be implemented without REC review. This, however, requires determining what counts as a standard use of AI. Further factors need to be considered at this stage for correct implementation, including the clinical evaluation of AI-based decision support systems. A linear process of AI evaluation based on a system’s development stage adds a third dimension alongside the AI’s risk category; it complements the existing regulatory frameworks for AI governance and supports a smooth transition from regulatory approval to successful implementation in clinical practice.

Discussion