Abstract

Artificial Intelligence (AI), now widely used across many sectors, has also entered healthcare, where it is transforming clinical practice and improving patient care. The integration of AI in healthcare also brings significant ethical challenges that need to be examined, namely justice and fairness, transparency, patient consent and confidentiality, accountability, and patient-centered and equitable care. These concerns are particularly pressing where healthcare and AI systems work together, because AI deployments have the potential to reinforce existing biases. Biases that stem from non-representative datasets and opaque model development processes need to be ironed out during AI implementation so that it becomes a fairer, more equitable tool for everyone to benefit from. To carry out this task, a continuous ethical framework and collaboration between hospital administration, clinicians, AI developers, and ethicists are necessary. It is crucial that the relevant groups be educated to make AI a success in healthcare and in patient care. Resistance needs to be addressed when it surfaces because of cognitive dissonance, partiality, or a lack of training and innovative mindsets. In this article, I highlight some of the issues that need to be addressed and resolved to achieve a fairer and more inclusive AI implementation in healthcare. Addressing the core ethical concerns, together with the right and just application of evolving strategies, can enhance both the clinical value and the trustworthiness of AI systems among patients and healthcare professionals, ensuring that these technologies serve everyone equitably.

Introduction and Motivation

AI has made remarkable progress, primarily due to advancements in deep neural networks, also known as deep learning, and AI models have consequently been considered for use in adjacent domains such as biomedicine and healthcare. Until recently, creating AI systems to assist pathologists, radiologists, and other imaging professionals required labor-intensive engineering. After many years of development, recent advances in AI have merged feature engineering with deep learning from large sets of training data, which means that patterns in large, complex data are now recognized much more easily. This puts AI on a path to making patient care more efficient and accurate, though it is accompanied by ethical challenges that must be continuously resolved.

With the implementation of AI, medicine is undergoing a rapid transformation, and the medical community is facing pressing concerns regarding its ethical implementation. This article explores widely recognized ethical challenges and discusses proposed strategies for ensuring the ethical integration of AI into clinical practice.

Core Ethical Challenges

Justice and Fairness

Justice and fairness are an important part of delivering AI guidance fairly in healthcare, and two notions should be taken into consideration. “Distributive justice” concerns the perceived fairness of outcomes and allocations, keeping equality, equity, and need in mind. “Procedural justice” concerns the perceived fairness of processes and procedures, including the equitable distribution of medical resources and unbiased decision-making, keeping in mind the disadvantage that certain patient groups can suffer if such processes and procedures are not adopted correctly. Biases can lead to misdiagnosis in marginalized populations, deepening discrimination and inequality, and an unequal concentration of power and resources in the hands of some hospitals would drive an imbalance of power that furthers the problems of bias. Hence, ethical solutions are a must and will demand government support to regulate and administer the equitable use of AI within the healthcare system.

Trustworthiness

To ensure that models are safe, reliable, and fair across diverse patient populations, the trustworthiness of AI systems in healthcare has to be grounded in reliability, transparency, explainability, and interpretability. For this, the models must be well trained on a representative dataset. The smaller or less representative the data, the more questionable the trustworthiness can be. For example, rare health conditions lack extensive amounts of data, which makes the software less trustworthy in its results and explanations. AI decisions must be understood by all interested parties in healthcare to enhance trustworthiness.

Patient Consent and Confidentiality

Patient consent and confidentiality are about upholding patient autonomy by allowing individuals to have control over their health data. Without strong consent mechanisms, confidentiality can be compromised by unauthorized access. AI-driven healthcare risks violating privacy, undermining trust, and weakening ethical standards; thus, patient consent and confidentiality are of utmost importance for protecting patient privacy in AI models. Otherwise, AI will infringe on confidential and personal patient data and raise legal implications for clinicians, hospitals, and other involved parties.

Accountability

Since AI involves multiple stakeholders, including developers, providers, and institutions, there is a potential increase in patient safety risk without clear accountability. Accountability for errors or unsafe recommendations becomes more complicated when AI systems are opaque and not easily traced. The interactions between AI model developers, organizational leaders, and healthcare providers leave it unclear who will take responsibility for errors. It is also unclear how internal administration will handle such cases: through policy revisions, the enactment of new processes, or something more opaque that pushes the problem away for as long as possible while causing more issues around patient safety and equity.

Patient-Centered and Equitable Care

To align with patient-centered care, AI tools must be adaptable and consistent with individual patient needs and preferences. This requires that AI recommendations generate insights clinicians can interpret according to the patient's unique needs and circumstances.

Patient-centered and equitable care is irreplaceable, and it can be enhanced by the use of AI. AI frees up room for the emotional and personal aspects of patient care, which depend on human empathy and sound judgment.

Evolving Strategies to Resolve Ethical Challenges

Multiscale ethics

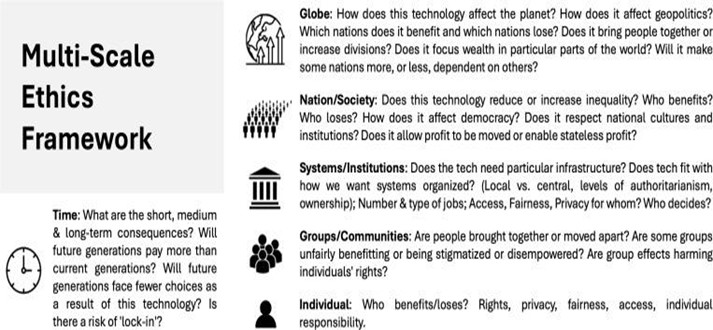

In this approach, AI is treated as a “socio-technical” system, one that currently lacks a structured approach to contextualizing its risks and benefits. While the framework helps identify risk patterns and anticipate recurring issues that are purely technical in nature, it also provides an inlet for incorporating the patient’s perspective and the clinician’s expert opinion, which makes for better patient care and a better clinician-patient interaction than simply discussing an AI-generated report. This approach will require hospitals to help create an interactive framework alongside AI, which will also free clinicians from administrative tasks so that the limited time of a visit is better spent discussing patient care. It is a two-factor approach: the technical analysis points to the issues to be fixed, and the identified patterns help incorporate the patient’s perspective for more individualized care, keeping privacy, autonomy, attention, and transparency in place, allowing for inclusive decision-making, and ensuring that ethical concerns are addressed.

Figure 1 Adapted from “Multi-Scale Ethics—Why We Need to Consider the Ethics of AI in Healthcare at Different Scales,” M. Smallman, 2022. Science and Engineering Ethics, 28(6), 63.

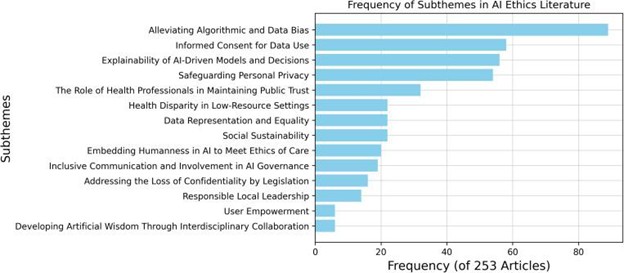

Figure 2. The “SHIFT” acronym for standardizing AI implementation in healthcare: Sustainability, Human Centeredness, Inclusiveness, Fairness, Transparency.

A thematic analysis of a recent literature review (Figure 2, above) identified key subthemes in AI ethics across 253 articles, with their corresponding frequencies: responsible local leadership (14), social sustainability (22), embedding humanness in AI (20), the role of health professionals in public trust (32), interdisciplinary collaboration for artificial wisdom (6), inclusive AI governance (19), mitigating algorithmic and data bias (89), data representation and equality (22), health disparities in low-resource settings (22), privacy protection (54), explainability of AI models (56), legislative safeguards for confidentiality (16), user empowerment (6), and informed consent for data use. The analysis shows that algorithmic bias, informed consent, explainability, and privacy emerge as the most prevalent concerns in responsible AI implementation in healthcare. This supports the points made in preceding sections regarding the protection of patient autonomy, confidentiality, and patient perspective in an AI-driven healthcare model, both for more accurate and equitable patient outcomes and for hospital administration of the ethical processes that make AI a successful experience for all. SHIFT helps visualize the balance required in AI usability in particular areas, establish consensus on key AI challenges, protect initiatives for patients and communities, and educate stakeholders on AI applications in medicine.
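As a quick check on the ranking, the frequencies listed above can be sorted directly. The sketch below uses only the counts given in the text; the variable names are illustrative, and informed consent is left out because no count is stated for it.

```python
# Subtheme frequencies as listed in the text (informed consent omitted:
# the text gives no count for it).
subtheme_counts = {
    "responsible local leadership": 14,
    "social sustainability": 22,
    "embedding humanness in AI": 20,
    "role of health professionals in public trust": 32,
    "interdisciplinary collaboration for artificial wisdom": 6,
    "inclusive AI governance": 19,
    "mitigating algorithmic and data bias": 89,
    "data representation and equality": 22,
    "health disparities in low-resource settings": 22,
    "privacy protection": 54,
    "explainability of AI models": 56,
    "legislative safeguards for confidentiality": 16,
    "user empowerment": 6,
}

# Rank subthemes from most to least frequent.
ranked = sorted(subtheme_counts.items(), key=lambda item: item[1], reverse=True)
top_four = [name for name, _ in ranked[:4]]
total_mentions = sum(subtheme_counts.values())

for name, count in ranked[:4]:
    print(f"{count:3d}  {name}")
```

The listed counts add up to more than the 253 articles reviewed, which suggests that a single article can be coded under several subthemes.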

One potential way to address the gaps that SHIFT highlights is to incorporate uncertainty measures into models, allowing providers to assess the reliability of AI-generated recommendations. This practice of ongoing scrutiny is called algorithmovigilance.
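One simple form an uncertainty measure can take is the entropy of a model's predicted class probabilities. The following is a minimal sketch, not a clinical implementation: the function names, the example probabilities, and the `max_entropy_fraction` threshold are all assumptions made for illustration.

```python
import math


def predictive_entropy(probs):
    """Shannon entropy (in bits) of a predicted class distribution."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def triage(probs, max_entropy_fraction=0.5):
    """Flag a prediction for clinician review when it is too uncertain.

    max_entropy_fraction is a hypothetical threshold: the share of the
    maximum possible entropy (log2 of the class count) that is tolerated
    before a human is asked to look at the case.
    """
    limit = max_entropy_fraction * math.log2(len(probs))
    entropy = predictive_entropy(probs)
    return {"entropy_bits": entropy, "needs_review": entropy > limit}


# Hypothetical model outputs over three candidate diagnoses.
confident = triage([0.97, 0.02, 0.01])
uncertain = triage([0.40, 0.35, 0.25])
print(confident["needs_review"], uncertain["needs_review"])
```

Reporting the uncertainty alongside the recommendation leaves the decision with the clinician, who can weigh a low-confidence output against the patient's circumstances.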

Since bias can emerge at any stage of development, algorithmovigilance, much like pharmacovigilance, emphasizes continuous evaluation of AI algorithms to detect and address bias and ensure fairness.
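Continuous evaluation of this kind can be sketched as a recurring audit that compares a model's performance across patient subgroups. The example below is an assumption-laden illustration: the group labels, the sample records, the choice of sensitivity (true-positive rate) as the metric, and the `max_gap` alert threshold are hypothetical and not drawn from any cited framework.

```python
from collections import defaultdict


def sensitivity_by_group(records):
    """True-positive rate per subgroup.

    records: iterable of (group, y_true, y_pred) tuples with binary labels.
    """
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for group, y_true, y_pred in records:
        if y_true == 1:
            positives[group] += 1
            if y_pred == 1:
                true_positives[group] += 1
    return {g: true_positives[g] / positives[g] for g in positives}


def audit(records, max_gap=0.10):
    """Raise an alert when subgroup sensitivities differ by more than max_gap."""
    rates = sensitivity_by_group(records)
    if not rates:
        return {"rates": {}, "gap": 0.0, "alert": False}
    gap = max(rates.values()) - min(rates.values())
    return {"rates": rates, "gap": gap, "alert": gap > max_gap}


# Hypothetical batch of (group, actual diagnosis, model prediction).
batch = [
    ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 1), ("group_a", 1, 0),
    ("group_b", 1, 1), ("group_b", 1, 0), ("group_b", 1, 0), ("group_b", 1, 0),
    ("group_a", 0, 0), ("group_b", 0, 1),
]
report = audit(batch)
print(report)
```

Running such an audit on each new batch of cases, rather than once before deployment, is what distinguishes ongoing vigilance from a one-time validation.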

The facts, figures, and literature reviewed above, covering both the ethical challenges of AI in healthcare and the evolving approaches to resolving them, raise questions that put AI in healthcare in a critical spotlight. They also make it a useful case study for looking deeper into the structure, patterns, and potential procedural and operational implementation of AI. Let’s take a look at some of these questions and how they may unfold, so that the pending development of AI can proceed with better insight, integration, and ethical implementation.

1. Is the development of healthcare AI fair and not biased?

This question points back to the prior discussion in this article: is the implementation ethically challenged or ethically sound? The rapid advancement of AI in healthcare presents significant challenges in maintaining ethical standards and regulatory oversight. A key concern is designing the workflow in a way that maintains and sustains the humanistic approach to patient care. Fairness, transparency, consent, accountability, and equitable care all need to be addressed, but many of these issues only come to light through the implementation of AI. The whole idea is to free up time for patient and clinician to build a better rapport, and with a technology this advanced, some glitches need to surface so that they can be addressed, leading to better outcomes, more impactful treatment discussions, and more input from the patient. The lack of standardization in industry regulations and review processes makes this challenging, and it makes bias a critical concern, though not because of AI itself. Improved use of AI has an impact on diverse representation in data collection, the care of marginalized groups, and the delivery of equitable healthcare. So, is AI fair? It can be fair and unbiased, provided the technology is given room to grow and iron out its glitches instead of being met with a closed-ended approach.

2. Is the deployment of healthcare AI patient-centered?

Following on from the first question, the second brings out a tension: while rigorous and necessary data protections safeguard confidentiality, they also restrict data availability, complicating the development of robust and unbiased AI models. It may look as though AI is not patient-centered, but AI continues to move toward being patient-centered. What AI lacks is a standardized ethical approach, with protocols and a framework that carry impact at multiple levels. In 2023, the American Medical Association (AMA) committed to policies addressing unforeseen conflicts in AI-driven healthcare. The Multi-Scale Ethics model highlights the need for a broader perspective on bias mitigation and responsible AI use. Strengthening the protocols set forth by the AMA and Multi-Scale Ethics for responsible bias monitoring that reduces disparities is a crucial step toward ethical, patient-centered AI implementation. On the other end, however, this implementation meets a regulatory framework that requires resubmission processes, discouraging rigorous ethical reviews and leaving the entire process stuck in a loop of passing the buck. Additionally, high validation costs, including dataset preparation, interdisciplinary expertise, and regulatory compliance, create barriers to responsible, unbiased innovation. It is a matter of questioning the process. Through continued reinvention, and because of much-needed glitches that expose problems, AI can correct its biases and become a fair and equitable experience; in the meantime, it is doing its job in a patient-centered way with whatever input it is given and despite the hurdles it constantly needs to overcome.

3. If healthcare AI is ethical, are the organization's policies revised to ensure ethical implementation? Are the experts being asked to help launch AI in a fully integrated manner?

Despite advancements, there is no existing framework, and no group on the business side of healthcare, that is fully committed to ensuring a seamless launch and ethical implementation of AI in healthcare. A major challenge right now is the lack of interdisciplinary collaboration between AI and human expertise. Ethics is often perceived as a set of static guidelines, when in fact it is an ongoing process of moral decision-making in both the AI and the human expert realm. The same perspective needs to be shared and implemented on both sides. How is this possible? It requires organizations to implement and integrate AI fully, to engage willingly in regular discussions on AI ethics, and to receive and integrate input from clinicians, researchers, healthcare ethicists, and developers. Until organizations and hospitals are on board with this process, shifting blame to AI is not a feasible approach. The technology is there to help, but ethical implementation is missing because those who can launch the platform are not fully integrated in the sphere themselves, which makes AI look like a non-patient-centered approach.

Conclusion

Openness to AI implementation is still in the works, and until that process is solidified, filling the gap will take time. People in healthcare are not as receptive to this enhancement as one would expect. Change takes time for everyone to adjust and adapt to. With no formal structure in place, it is clear why there are challenges to implementing this platform. AI integration should be approached with optimism, as it has the capacity and the potential to enhance healthcare expertise and informed patient care. Policies need to be redefined and implemented sooner rather than later. In this article, I have raised some of the concerns to be addressed and possible solutions to them. A more standardized approach and input are needed to make AI more seamless, so that the implementation, process, and delivery of services are equitable and fair rather than biased. AI has the potential to make the automation of repetitive tasks more aligned, accurate, and seamless, which will free healthcare professionals to focus more on patient care and on discussing diagnoses alongside AI’s input.

A disconnect persists among the experts involved because of a lack of insight on the part of organizations and clinicians into the launch of AI, and because of the time it takes to fully develop and integrate the technology in the face of so much resistance. Letting go of the old approach to make way for an enhanced one is taking enormous time and creating a gap before the proper implementation of AI. The understanding that hospitals and clinicians still need to gain, and their cross-questioning of AI integration and pending development, are causing issues with the implementation of AI. AI in healthcare needs the space and insights to continuously develop and integrate for improved outcomes.

Discussion