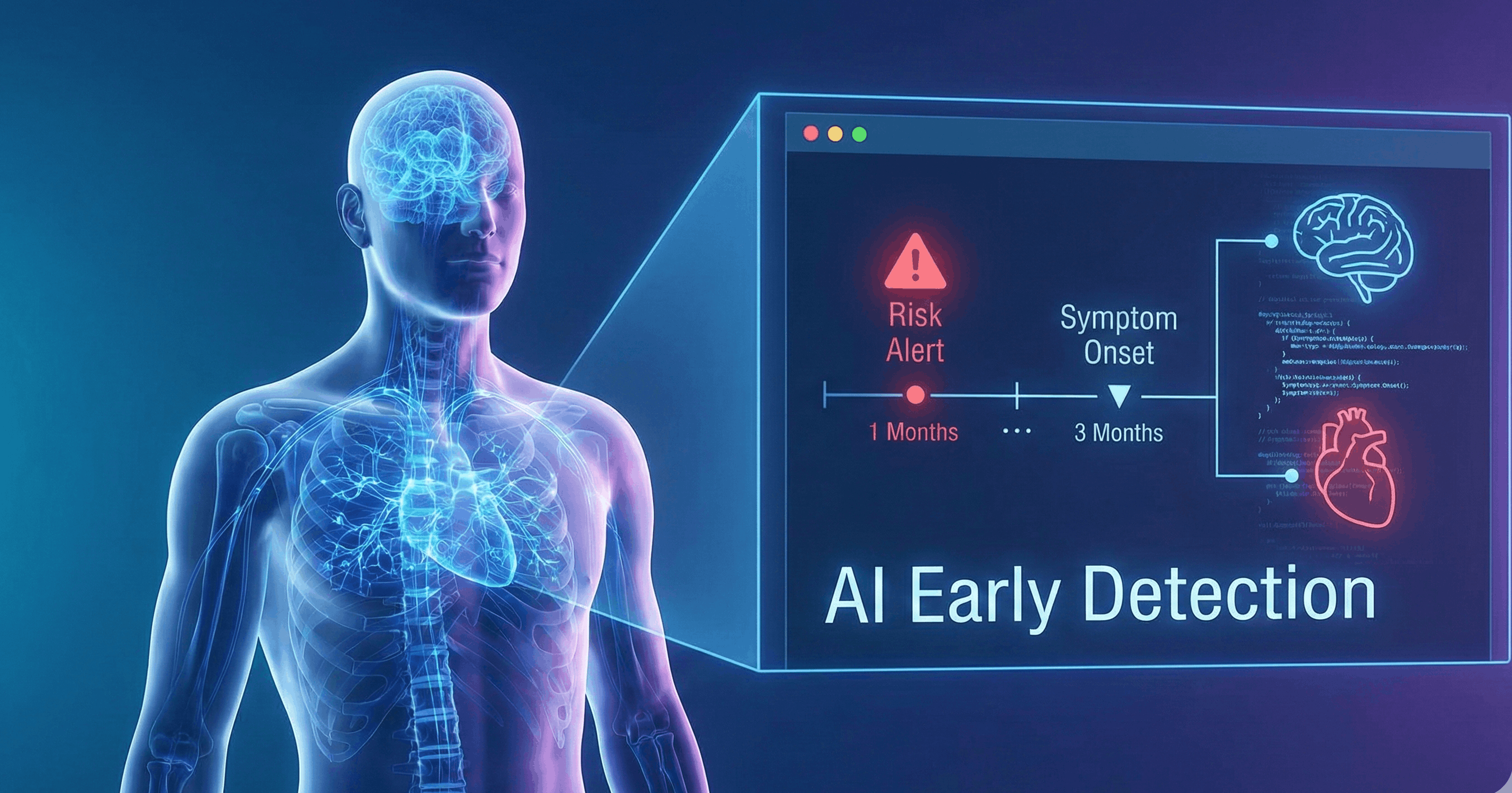

Artificial Intelligence (AI) is reshaping the future of healthcare. Healthcare systems in the US and UK have started to adopt tools such as AI scribes and conversational chatbots, amid claims that such tools can outperform clinicians in diagnostics. Survey data from over 100 physicians polled by Fierce Healthcare and Sermo indicate that many doctors are using general-purpose LLMs for clinical tasks. Among respondents who use LLMs, 76% reported using them in clinical decision-making, over 60% to check drug interactions, more than half for diagnostic support, nearly half to generate clinical documentation, and 70% for patient education.

While these technologies promise to support healthcare providers in making informed decisions more efficiently, they pose significant risks when used without training and oversight.

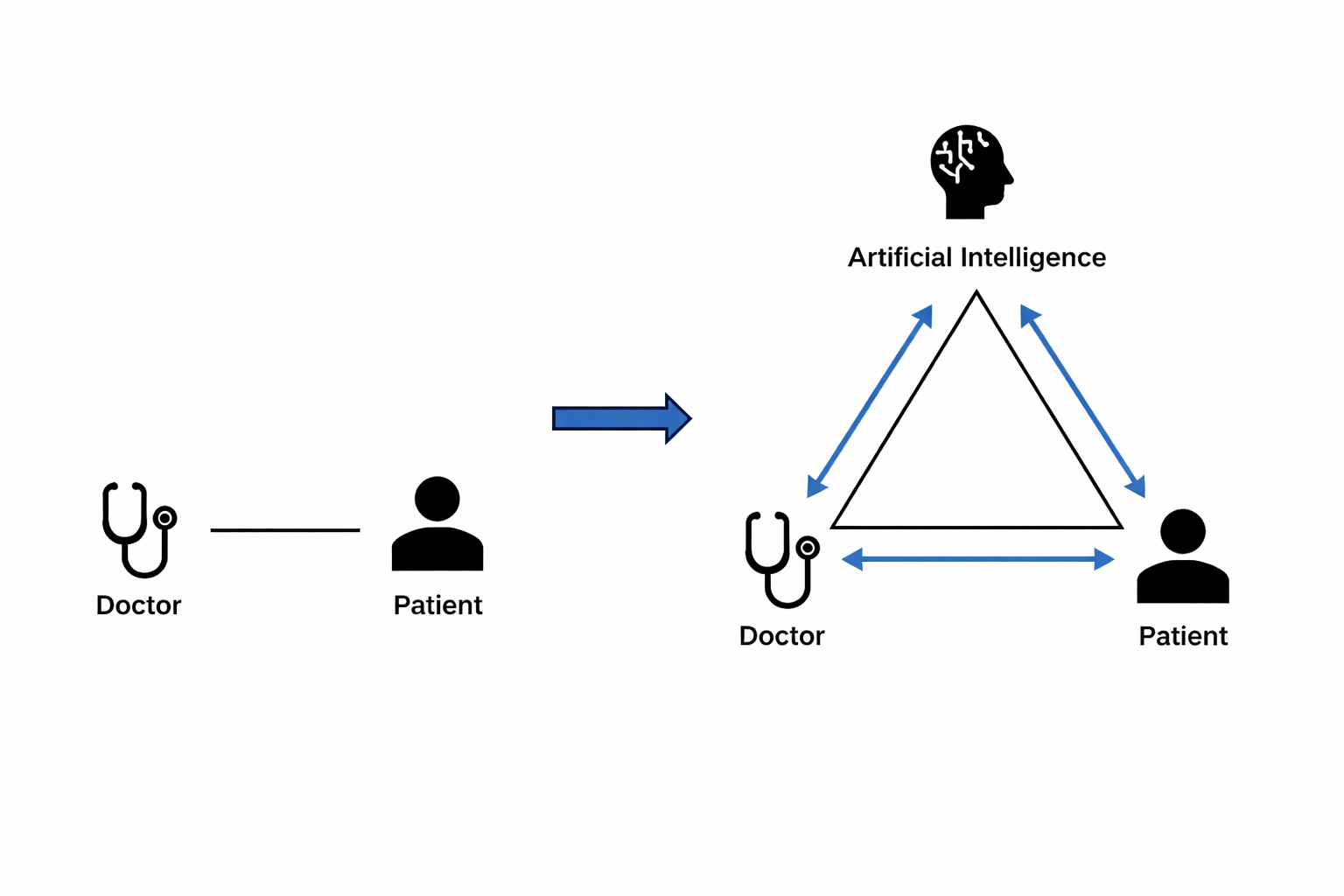

As AI becomes integrated into the clinical workflow, the traditional clinician-patient dyad evolves into a triadic relationship involving the clinician, the patient, and generative AI. Three core competency domains have been identified for the safe and ethical use of AI in patient care: AI literacy, critical integration, and effective communication with patients.

AI Literacy: Recognising Capabilities and Limitations

AI literacy is the foundation of sensible use of generative AI in healthcare. Clinicians must be trained to assess the scientific rigour of AI output. The risks associated with AI-generated health information include factual errors, omissions, and fabricated elements, commonly referred to as “AI hallucinations”.

Another crucial aspect of AI literacy is an understanding of AI’s “jagged frontier”: its performance is uneven and varies with the complexity of the task. A tool might draft suitable referral documentation but fail to suggest appropriate medication dosages. Clinicians must therefore critically evaluate each AI output rather than trusting it on the strength of past performance.

Clinicians also need training in communicating effectively with AI systems, which involves specific prompting skills. A well-formulated, detailed instruction can significantly improve the accuracy of the AI’s output, so effective prompt writing is essential for the responsible use of generative AI.
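As an illustration of what a "well-formulated, detailed instruction" might look like in practice, the sketch below assembles a prompt from labelled parts. The class name `ClinicalPrompt`, its fields, and the example wording are all hypothetical choices made for this illustration; they are not drawn from any specific tool or guideline.

```python
from dataclasses import dataclass, field


@dataclass
class ClinicalPrompt:
    """Hypothetical structure for a detailed instruction to a generative AI tool."""

    role: str      # who the AI should act as
    task: str      # what is being asked
    context: str   # de-identified clinical context only
    constraints: list[str] = field(default_factory=list)  # limits on the answer
    output_format: str = "Plain paragraphs"

    def render(self) -> str:
        """Join the parts into a single prompt, one labelled section per line."""
        lines = [
            f"Role: {self.role}",
            f"Task: {self.task}",
            f"Context: {self.context}",
        ]
        if self.constraints:
            lines.append("Constraints:")
            lines.extend(f"- {c}" for c in self.constraints)
        lines.append(f"Output format: {self.output_format}")
        return "\n".join(lines)


# Example wording is illustrative only.
prompt = ClinicalPrompt(
    role="Assistant drafting a referral letter for clinician review",
    task="Draft a referral letter from the summary provided",
    context="De-identified summary supplied by the clinician",
    constraints=[
        "Do not suggest medication dosages",
        "Flag any statement you are unsure of",
    ],
)
print(prompt.render())
```

Separating role, task, context, and constraints makes it harder to omit a detail, and the explicit constraints reflect the point that a tool may handle one task well and another poorly.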

Clinical Validation: Balancing AI Support with Clinical Judgement

While AI literacy emphasises understanding of the AI system, clinical validation focuses on how AI output is used in patient care. The key risk of over-reliance on AI is automation bias, which time constraints and heavy workloads can worsen and which can affect clinicians' cognitive skills. Over time, it can lead to skill erosion, which adversely affects clinical reasoning and reflective skills. To maintain competency, clinicians should take part in peer discussions and periodically manage cases using traditional practice.

Documenting AI-generated information is another element of critical integration and validation that supports informed decision-making in patient care. Recording why AI advice is accepted or rejected improves transparency and enables organisations to identify errors and improve AI performance.
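One way such documentation could be structured is sketched below. It is a minimal illustration, not a description of any existing record system: the `AIAdviceRecord` fields and the `rejection_rate_by_tool` helper are hypothetical names, and the rejection rate is only one crude signal an organisation might review.

```python
import json
from collections import Counter
from dataclasses import asdict, dataclass
from datetime import datetime, timezone


@dataclass
class AIAdviceRecord:
    """Hypothetical log entry for one piece of AI advice and the clinician's decision."""

    tool: str
    suggestion: str
    accepted: bool
    rationale: str          # why the advice was accepted or rejected
    clinician_id: str
    recorded_at: str = ""   # filled with a UTC timestamp when left empty

    def __post_init__(self):
        # A rationale is mandatory for both acceptance and rejection.
        if not self.rationale.strip():
            raise ValueError("A rationale is required for every decision")
        if not self.recorded_at:
            self.recorded_at = datetime.now(timezone.utc).isoformat()

    def to_json(self) -> str:
        """Serialise the record, for example for an audit trail."""
        return json.dumps(asdict(self))


def rejection_rate_by_tool(records):
    """Return the share of suggestions rejected for each tool."""
    total = Counter(r.tool for r in records)
    rejected = Counter(r.tool for r in records if not r.accepted)
    return {tool: rejected[tool] / count for tool, count in total.items()}


# Illustrative entries only.
log = [
    AIAdviceRecord("scribe", "Draft visit summary", True, "Matches consultation notes", "c1"),
    AIAdviceRecord("scribe", "Draft visit summary", False, "Omitted a reported symptom", "c1"),
]
print(log[0].to_json())
print(rejection_rate_by_tool(log))
```

Requiring a rationale in both cases keeps accepted advice as reviewable as rejected advice, which is what allows errors to be traced later.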

Ethical Communication

AI should be presented as an option to assist healthcare professionals. Clear communication is essential to inform patients about the limitations of current tools, especially in data handling, and about their choice to opt out, both of which may raise ethical and legal issues.

Transparency is also crucial when AI is involved in diagnosis, documentation, or research. Patients should be well informed about the use of AI and about the figures it produces, such as risk scores and probabilities, in an easy-to-understand way.

Conclusion

AI integration shifts the traditional doctor-patient dyad to a triadic model of care. Clinicians need to develop AI literacy, critical reasoning, and transparency to ensure the effective and safe use of AI in healthcare. Developing these skills requires systematic education across undergraduate, postgraduate, and continuing professional training. With such an approach, AI can enhance the foundation of human medicine.
